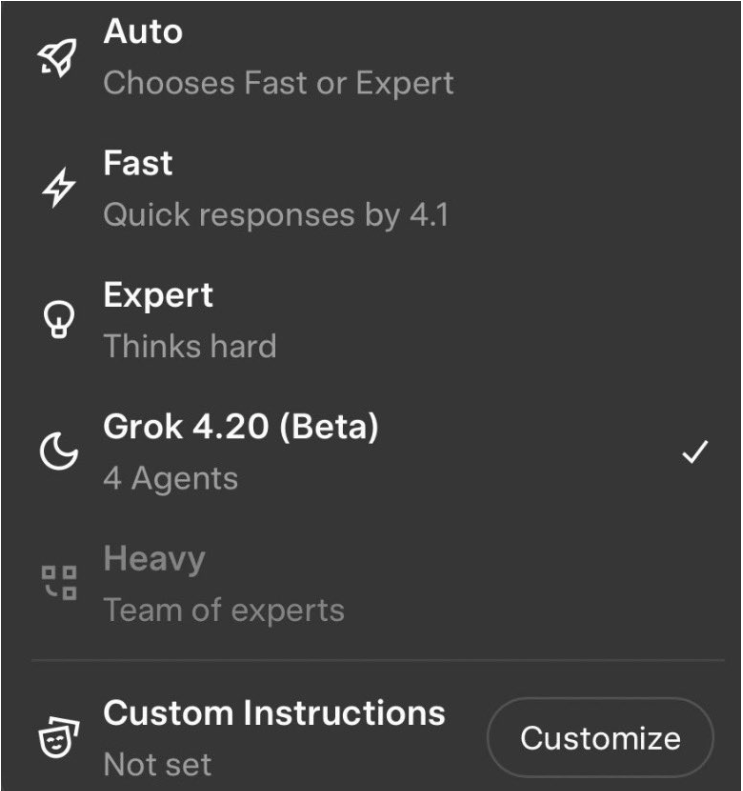

XAI Launches Grok 4.20 , 4 AI Agents Collaborating. Estimated ELO 1505-1535

xAI has launched Grok 4.20 live in Beta.

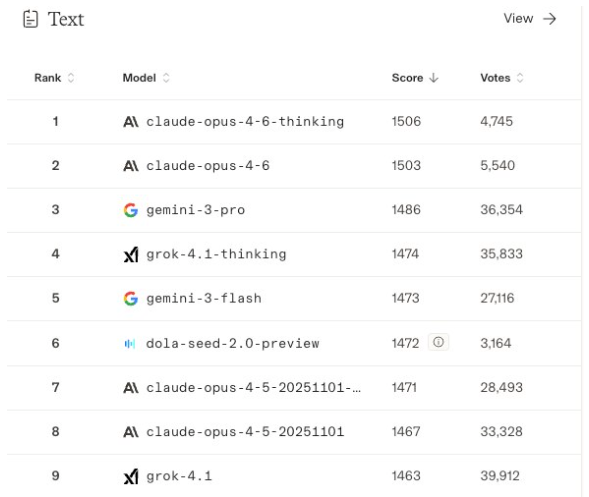

Estimated Arena (LMArena) Elo for Grok 4.20: ~1505–1535 provisional (Grok 4.20 analysis).

Reasoning: Grok 4.1 Thinking is already at 1483. The multi-agent council + extra inference-time compute + engineering/coding gains + hallucination reduction typically add 20–60 Elo points in crowd-sourced arenas (see historical jumps from single → agentic systems). Once fully ranked it is very likely to take #1 overall. No official provisional ranking exists yet because it is still beta/internal.

It is not one AI. It is four AI Agents.

xAI built a 4 Agents system – four specialized AI agents that think in parallel and debate each other in real-time before giving you an answer.

This isn’t a single monolithic model but a multi-agent system where multiple replicas (or specifically four specialized agents named Grok, Harper, Benjamin, and Lucas) deliberate and debate in parallel before generating a response, aiming to produce more thoughtful, well-rounded outputs.

It has good but limited set of benchmarks so far. Great Safety Overfit tests, ranking #2 on ForecastBench, and delivering strong results in live trading competitions like Alpha Arena (with +34.59% returns where competitors posted losses).

User reviews are mostly positive but mixed. It is fast with answer and is has quick convergence on high-quality answers. It is a reliable research and coding assistant.

What’s new:

* 4-agent collaboration (think → debate → consensus)

* 256K context window (up to 2M)

* Native multimodal (text + image + video)

* Trained on 200K GPUs (Colossus supercluster)

* Only AI that was profitable in live stock trading competitions

This was the trading done by Grok 4.20 in December 2025.

Grok 4.20 (Beta) is xAI’s latest major iteration in the Grok 4 series, currently in a limited/internal beta rollout as of mid-February 2026 (announced and beginning deployment around Feb 17). It is available to SuperGrok (~$30/mo) and X Premium+ users, with broader rollout across apps and the API expected imminently. Elon Musk and xAI have confirmed details via X, and early checkpoints have been tested publicly.

Training Details

Grok 4.20 was trained on xAI’s Colossus supercluster (hundreds of thousands of GPUs, with ongoing scaling toward 1M+). Training faced delays of a few weeks in late January 2026 due to extreme cold weather and power-line incidents from construction, pushing the largest model variant’s final training past Jan 30.

It builds directly on the Grok 4 / 4.1 pre-training + post-training recipe:

Massive pre-training on public internet data, third-party datasets, user/contractor data, and internally generated synthetic data (with heavy filtering, deduplication, and safety classification).

Heavy post-training via scaled reinforcement learning (RL), including verifiable rewards, human feedback, model-as-judge (using frontier agentic models as reward models), and supervised fine-tuning.

Unique to 4.20, it has extensive training for multi-agent coordination — the model is not a single forward pass but a council of 4 specialized agent replicas (often referred to internally or in demos as Grok + Harper + Benjamin + Lucas) that collaborate in real time on every complex query. This includes debate, fact-checking, hypothesis generation, and verification loops.

Heavy ingestion of real-time X (Twitter) firehose data (~68M English tweets/day) for low-latency sentiment, events, and world-model updates. This gives a structural edge in real-world, fast-moving domains like trading and news.

No official parameter count has been released (Grok 4 was rumored ~3T MoE; Grok 5 rumors point to ~6T). Context window is at least 2M tokens in agentic/tool-use modes (inherited/enhanced from Grok 4.1 Fast).

Leaderboard Rankings and Provisional Arena Elo

Grok 4.20-specific results (early checkpoints/preview):

#1 on Alpha Arena Season 1.5 (live stock-trading competition, Jan 2026): Turned $10k into ~$11k–$13.5k (+10–12% baseline, up to +34–47% in optimized configs). Only profitable model; 4 Grok 4.20 variants took 4 of top 6 spots while OpenAI/Google rivals finished in the red. Used real-time X sentiment + price signals (1–5 min horizon).

#2 on ForecastBench (global AI forecasting leaderboard): Outperformed GPT-5, Gemini 3 Pro, Claude Opus 4.5, and closed gap to elite human superforecasters.

Strong early signals in coding/math/theorem-proving, multimodal, and open-ended engineering.

Technical Specifications & Improvements vs Grok 4.1

Grok 4.1 (Nov 2025) key traits:

Major focus on real-world usability, personality coherence, emotional/collaborative intelligence.

65% hallucination reduction (from ~12% → ~4.2%).

Large-scale RL on non-verifiable rewards (style, helpfulness, alignment) using agentic reward models.

Strong multimodal (text + images; video incoming), native tool use, 2M context in Fast variant.

Arena dominance and 64.78% win rate vs prior production Grok.

What changed in 4.20 (biggest differences):

Core architecture shift — Single-model (even with thinking) → 4-agent multi-agent collaboration system (council of replicas that debate, specialize, fact-check, and synthesize on every turn). This is the headline breakthrough — not a normal model release.

Dramatically better at open-ended engineering questions, complex coding, theorem-proving, and real-world agentic tasks

(Musk said it is starting to correctly answer open-ended engineering questions.

Further hallucination reduction via built-in agent fact-checking and real-time X grounding.

Superior multimodal (explicit video understanding/generation improvements) and real-time data processing.

Enhanced trading/financial reasoning and low-latency world modeling (proven in Alpha Arena).

More inference-time compute and parallel hypothesis exploration.

Yes, more reinforcement learning — especially for agent orchestration, multi-turn stability, and verifiable rewards in collaborative settings. The RL infrastructure from Grok 4/4.1 was scaled further for the council mechanism.

4.1 was about making the single model more pleasant/reliable/useful. 4.20 is about system-level intelligence via explicit multi-agent scaffolding on top of an even stronger base.

The Best “Go Paid” Deal on Substack! You Get REAL Stuff!!

Go paid at the $5 a month level, and we will send you both the PDF and e-Pub versions of Etienne’s new book: To See the Cage Is to Leave It - 25 Techniques the Few Use to Control the Many and a coupon code for 10% off anything in the https://artofliberty.org/store/.

Go paid at the $50 a year level, and we will send you a free paperback edition of Etienne’s new book: To See the Cage Is to Leave It - 25 Techniques the Few Use to Control the Many OR “Government” - The Biggest Scam in History… Exposed! OR a 64GB Liberator flash drive if you live in the US. If you are international, we will give you a $10 credit towards shipping if you agree to pay the remainder.

Support us at the $250 Founding Member Level and get a signed high-resolution hardcover of “Government” - The Biggest Scam in History... Exposed! + Liberator flash drive + a signed high-resolution hardcover of Etienne’s new book: To See the Cage Is to Leave It - 25 Techniques the Few Use to Control the Many + everything else in our “Everything Bundle” of the best in voluntaryist thought delivered domestically. International pays shipping. Our only option for signed copies besides catching Etienne @ an event.

Comments ()